An AI Reinforcement Learning Expert Advisor is an AI-based EA trading robot for algorithmic trading on MetaTrader (MT4/MT5) whose decisions come from continuous learning on market outcomes rather than from fixed rule sets. Unlike rule-based trading bots that follow static conditions (for example, predefined indicator thresholds), RL-based EAs adjust their trading logic dynamically by analyzing past and current market behavior. At 4xPip, we build these EAs through our workflow, in which the strategy provided by the trader/EA owner is converted into an adaptive trading system trained using Machine Learning (ML), Deep Learning (DL), and Reinforcement Learning (RL) techniques.

Real-time market adaptation refers to the EA’s ability to respond quickly to changing volatility, liquidity shifts, and evolving price structures, all of which are ever-present in financial markets. Instead of relying on fixed logic, an RL-based EA improves performance through a reward and penalty system, learning which trade actions increase profitability and which lead to losses. In our 4xPip AI-based EA trading robot development process, this feedback-driven learning allows the bot to continuously refine entries, exits, and risk decisions, making it suitable for fast-changing market environments where adaptability is critical.

Reinforcement Learning in Trading Systems

Reinforcement learning in trading systems is built around four core elements: an agent, environment, actions, and rewards. In simple trading terms, the agent is the AI based EA trading robot developed by our team at 4xPip, while the environment is the live market on MetaTrader (MT4/MT5). The agent observes market conditions using the defined strategy (candlesticks, indicators, and news data), then takes actions such as executing trades and receives feedback in the form of profit or loss, which acts as a reward signal.

Unlike supervised learning, which learns from labeled historical data, or unsupervised learning, which finds hidden structures in data without trade execution feedback, reinforcement learning directly learns from trading outcomes in real time. This makes it highly effective for adaptive systems where market behavior constantly changes. In an RL framework, trading decisions are simplified into actions: Buy, Sell, or Hold, where each action is evaluated based on its resulting profit or drawdown. The EA then adjusts future decisions to maximize cumulative reward while minimizing risk exposure.
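The agent, environment, action, and reward loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the 4xPip implementation: the names `Action`, `step_reward`, `run_episode`, and `momentum_policy` are hypothetical, the "environment" is just a list of prices, and the reward is assumed to be the one-bar price change in the chosen direction.

```python
from enum import Enum


class Action(Enum):
    """The three discrete actions named in the text."""
    BUY = 1
    SELL = -1
    HOLD = 0


def step_reward(action, price_now, price_next):
    """Assumed reward: P&L of holding the chosen direction for one bar."""
    return action.value * (price_next - price_now)


def run_episode(prices, policy):
    """Walk through the price series, ask the policy for an action at each
    bar, and accumulate the reward the environment returns."""
    total = 0.0
    for t in range(len(prices) - 1):
        action = policy(prices[: t + 1])  # the agent only sees past prices
        total += step_reward(action, prices[t], prices[t + 1])
    return total


def momentum_policy(history):
    """Hypothetical stand-in for a learned policy: follow the last move."""
    if len(history) < 2:
        return Action.HOLD
    if history[-1] > history[-2]:
        return Action.BUY
    if history[-1] < history[-2]:
        return Action.SELL
    return Action.HOLD


if __name__ == "__main__":
    print(run_episode([1.0, 2.0, 3.0, 4.0], momentum_policy))
```

In a real RL setup the hand-written `momentum_policy` would be replaced by a policy whose parameters are updated from the accumulated reward.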

Market Data Inputs Used for Real-Time Adaptation

Market data inputs for real-time adaptation in a reinforcement learning EA include price action (OHLCV), tick volume, order book depth, and volatility indicators such as ATR and standard deviation. In an AI based EA trading robot developed through our 4xPip programmer/developer workflow, these inputs form the live “environment state” that the Bot continuously evaluates on MetaTrader (MT4/MT5). Combined with a defined Strategy, this allows the system to detect micro market shifts like breakout pressure, liquidity imbalances, and volatility expansions before executing Buy/Sell decisions.
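As a rough illustration of how such inputs might be turned into an "environment state", the sketch below builds a small feature dictionary from OHLCV bars. The bar layout (dicts with `open`, `high`, `low`, `close`, `volume` keys) and the function names are assumptions made for this example, and the ATR here is a plain average of true ranges rather than Wilder's smoothed version.

```python
from statistics import pstdev


def true_range(high, low, prev_close):
    """Largest of the three ranges used in the standard true-range definition."""
    return max(high - low, abs(high - prev_close), abs(low - prev_close))


def atr(bars, period=14):
    """Simple-average ATR over the last `period` bars (not Wilder smoothing)."""
    if len(bars) < period + 1:
        raise ValueError("need at least period + 1 bars")
    start = len(bars) - period
    ranges = [
        true_range(bars[i]["high"], bars[i]["low"], bars[i - 1]["close"])
        for i in range(start, len(bars))
    ]
    return sum(ranges) / period


def build_state(bars, period=14):
    """Assemble a hypothetical state dictionary for the agent to observe."""
    closes = [bar["close"] for bar in bars[-period:]]
    return {
        "last_close": bars[-1]["close"],
        "atr": atr(bars, period),
        "close_std": pstdev(closes),  # population standard deviation of closes
        "tick_volume": bars[-1]["volume"],
    }
```

Order book depth is left out because its format depends on the data feed; it could be added as further keys in the same dictionary.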

Real-time data feeds differ significantly from historical datasets used in training. Historical data is used to train and validate the model, while real-time feeds are streamed for live decision execution and adaptation. The key factor in effective RL performance is low-latency data processing, where market updates are analyzed within milliseconds to avoid slippage and outdated signals. In 4xPip AI based EA trading robot systems, optimized data pipelines ensure fast synchronization between live market conditions and decision logic, enabling accurate trade execution under rapidly changing volatility conditions.

Reward Systems and Feedback Loops in EA Learning

Reward systems and feedback loops in EA learning are built on measurable trading outcomes where profit, loss, and risk-adjusted returns act as core reward signals. In an AI based EA trading bot developed through the 4xPip framework, each executed trade is evaluated against the defined Strategy on MetaTrader (MT4/MT5), where profitable outcomes increase reward scores while inefficient trades reduce them. This allows the Expert Advisor to continuously align decision-making with long-term profitability rather than isolated trade results.

To maintain trading discipline, the system applies structured penalties for drawdowns, high-risk exposure, and overtrading behavior, ensuring the EA avoids unstable market actions. In 4xPip reinforcement-based models, these penalties are directly tied to risk metrics such as volatility spikes and loss streaks, which helps stabilize performance across changing market conditions. Continuous feedback loops refine the AI model over time, allowing it to adjust trade entries, exits, and position sizing based on accumulated market experience, improving overall decision accuracy with each iteration.
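One way to picture a reward signal with structured penalties is shown below. It is a sketch under stated assumptions: the function name `shaped_reward`, the thresholds, and the penalty weights are illustrative values chosen for this example, not parameters taken from the 4xPip framework.

```python
def shaped_reward(pnl, drawdown, trades_in_window,
                  max_drawdown=0.05, max_trades=10,
                  drawdown_weight=2.0, overtrade_weight=0.1):
    """Profit or loss minus penalties for excess drawdown and overtrading.

    pnl              -- realized profit (positive) or loss (negative)
    drawdown         -- current drawdown as a fraction of peak equity
    trades_in_window -- number of trades opened in the recent window
    """
    # Only the part of the drawdown above the tolerated level is penalized.
    drawdown_penalty = drawdown_weight * max(0.0, drawdown - max_drawdown)
    # Each trade beyond the allowed count adds a fixed penalty.
    overtrade_penalty = overtrade_weight * max(0, trades_in_window - max_trades)
    return pnl - drawdown_penalty - overtrade_penalty
```

Because the penalties are subtracted from every trade's reward, a strategy that is profitable but trades excessively or sits in deep drawdown scores lower than one with the same profit and more controlled behavior.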

Dynamic Strategy Adjustment During Market Volatility

Reinforcement Learning (RL) within the AI based EA trading robot developed at 4xPip continuously evaluates market behavior using volatility signals such as ATR, price momentum, and candlestick structure, with the model trained on the last 10 years of historical data. This allows the Bot on MetaTrader to detect shifts between ranging and trending regimes in real time and adjust its Strategy accordingly without manual input from the Trader. Within the 4xPip framework, the developer ensures the model recognizes when market conditions become unstable or directional strength increases, enabling adaptive decision-making based on live market structure.

During high-volatility phases such as news spikes or liquidity drops, the AI shifts execution style dynamically, for example by moving from swing-based positioning to fast scalping behavior or by temporarily reducing exposure when risk penalties increase under the Reward = Profit – Loss – Risk Penalty system. In stable conditions, it reverts to broader trend-following logic, optimizing entries and exits with longer holding periods. This continuous feedback loop allows the AI based EA trading robot to refine itself over time, improving execution quality across market conditions including breakouts, consolidation, and sudden movements driven by economic events.
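The style switch described above can be illustrated with a deliberately simple, hand-written rule. In an RL system this mapping would be learned rather than coded; the sketch below only shows the kind of behavior the text describes. The ratio threshold, the regime labels, and the `EXECUTION_PROFILES` table are all assumptions made for the example.

```python
def classify_regime(current_atr, baseline_atr, high_ratio=1.5):
    """Label the market by comparing current ATR with a longer-run baseline."""
    if baseline_atr <= 0:
        raise ValueError("baseline_atr must be positive")
    if current_atr / baseline_atr >= high_ratio:
        return "high_volatility"
    return "stable"


# Hypothetical execution settings for each regime.
EXECUTION_PROFILES = {
    "high_volatility": {"style": "scalping", "exposure_scale": 0.5},
    "stable": {"style": "trend_following", "exposure_scale": 1.0},
}


def select_profile(current_atr, baseline_atr):
    """Return the execution profile for the detected regime."""
    return EXECUTION_PROFILES[classify_regime(current_atr, baseline_atr)]
```

For instance, `select_profile(3.0, 1.0)` picks the scalping profile with halved exposure, while `select_profile(1.0, 1.0)` falls back to trend following at full exposure.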

Exploration vs Exploitation in Live Trading Decisions

In Reinforcement Learning (RL) based trading systems, the core decision tension is between exploration (trying new trade actions or strategies to discover better opportunities) and exploitation (using already proven profitable actions). Exploration helps the bot avoid stagnation in a changing market, while exploitation focuses on maximizing returns from historically successful patterns. In our 4xPip AI based EA framework, this balance is learned directly from long-term market behavior using the reward signal structure derived from profit consistency, drawdown control, and risk-adjusted outcomes.

To manage this in live MetaTrader environments, RL models use controlled randomness. Value-based models such as DQN typically rely on epsilon-greedy policies, where the system occasionally tests a new action instead of always repeating the best-known trade, while policy-based models such as PPO and SAC sample actions from a learned probability distribution. Probabilistic decision-making (softmax action selection) likewise distributes trade selection according to confidence levels rather than fixed rules. This allows the EA developed by our team to adapt dynamically, refining Strategy execution over time while still protecting capital through risk-aware decision thresholds.
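The two selection rules named above, epsilon-greedy and softmax, are compact enough to show directly. The sketch assumes the model exposes one estimated value per action (`q_values`, ordered as in the hypothetical `ACTIONS` list); the function names and the temperature parameter are illustrative choices.

```python
import math
import random

ACTIONS = ["buy", "sell", "hold"]  # assumed ordering of the value estimates


def epsilon_greedy(q_values, epsilon, rng):
    """With probability epsilon explore a random action, else exploit the best."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda i: q_values[i])


def softmax_probs(q_values, temperature=1.0):
    """Convert value estimates into selection probabilities."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    top = max(q_values)  # subtract the max for numerical stability
    exps = [math.exp((q - top) / temperature) for q in q_values]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_sample(q_values, temperature, rng):
    """Draw an action index in proportion to its softmax probability."""
    weights = softmax_probs(q_values, temperature)
    return rng.choices(range(len(q_values)), weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(42)
    q = [0.2, -0.1, 0.05]
    print(ACTIONS[epsilon_greedy(q, epsilon=0.1, rng=rng)])
    print(ACTIONS[softmax_sample(q, temperature=0.5, rng=rng)])
```

A lower `epsilon` or `temperature` pushes the agent toward exploitation; raising either makes it explore more.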

Risk Management and Stability in Real-Time RL Trading

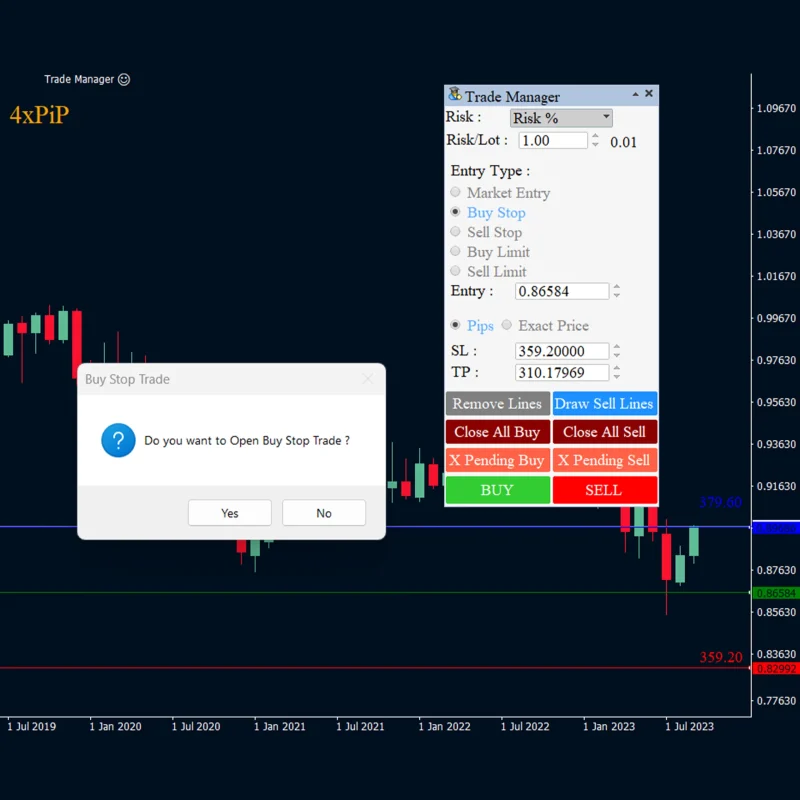

In real-time RL trading systems, Risk Management is enforced directly inside the Expert Advisor logic built by our 4xPip team. Capital protection is handled through dynamic stop-loss placement, volatility-based position sizing, and exposure limits per trade. The Strategy does not only decide entry and exit but also calculates optimal Stop Loss (SL) and Take Profit (TP) levels using market conditions, ensuring losses remain controlled while preserving upside potential in MetaTrader (MT4/MT5) execution environments.
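A common way to tie Stop Loss, Take Profit, and position size to volatility is to express them in ATR multiples and risk a fixed fraction of equity per trade. The sketch below follows that approach as an assumption; the source does not specify the formulas used, and the multipliers, the `value_per_unit` parameter, and the function names are hypothetical.

```python
def atr_stop_levels(entry, atr, direction, sl_mult=1.5, tp_mult=3.0):
    """Place SL and TP at ATR multiples from entry.

    direction is +1 for a long trade and -1 for a short trade.
    """
    if direction not in (1, -1):
        raise ValueError("direction must be +1 or -1")
    stop_loss = entry - direction * sl_mult * atr
    take_profit = entry + direction * tp_mult * atr
    return stop_loss, take_profit


def position_size(equity, risk_fraction, entry, stop_loss,
                  value_per_unit=1.0, max_units=None):
    """Units to trade so that hitting the stop loses about risk_fraction of equity."""
    stop_distance = abs(entry - stop_loss)
    if stop_distance == 0:
        raise ValueError("stop loss must differ from entry")
    units = (equity * risk_fraction) / (stop_distance * value_per_unit)
    if max_units is not None:
        units = min(units, max_units)  # per-trade exposure limit
    return units
```

Because the stop distance widens with ATR, the same risk fraction yields a smaller position in volatile markets and a larger one in quiet markets.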

To limit overfitting to short-term market noise, the AI model uses constraints such as reward clipping, L2 regularization, and action penalties that discourage excessive sensitivity to random price spikes. This helps the AI based EA trading bot, trained on 10+ years of historical data, maintain stable behavior across different regimes. During extreme volatility events like crashes or liquidity gaps, the system automatically reduces position size or switches to conservative decision thresholds, allowing the Expert Advisor to maintain execution stability while still adapting intelligently to real market conditions.
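Two of the constraints mentioned above, reward clipping and L2 regularization, can each be written in a line or two. The bounds and the coefficient `lam` below are illustrative assumptions, and the functions are generic forms of the techniques rather than the specific settings used in any 4xPip model.

```python
def clip_reward(reward, low=-1.0, high=1.0):
    """Bound a single-step reward so one outlier trade cannot dominate learning."""
    return max(low, min(high, reward))


def l2_penalty(weights, lam=0.01):
    """L2 regularization term: lam times the sum of squared model weights."""
    return lam * sum(w * w for w in weights)
```

The clipped reward is what the agent learns from, while the L2 term is added to the training loss to discourage large weights that fit noise.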

Summary

An AI Reinforcement Learning EA is an advanced automated trading system designed for MetaTrader (MT4/MT5) that continuously adapts to real-time market conditions instead of relying on fixed rules. It learns from live trading outcomes using a reward and penalty mechanism, where profitable trades reinforce successful behavior and losses guide adjustments. By analyzing dynamic market data such as price action, volatility, and volume, the system refines its entry, exit, and risk management decisions over time. This allows the EA to adjust effectively during different market conditions, including high volatility and stable trends, while maintaining strong risk control and improving performance through continuous learning.

4xPip Email Address: [email protected]

4xPip Telegram: https://t.me/pip_4x

4xPip Whatsapp: https://api.whatsapp.com/send/?phone=18382131588

FAQs

- What is an AI Reinforcement Learning Expert Advisor in trading?

An AI RL Expert Advisor is a trading bot that learns from market outcomes instead of following fixed rules. It continuously improves its decision-making based on rewards and penalties from past trades.

- How is RL-based trading different from rule-based trading bots?

Rule-based bots follow static conditions like indicator signals, while RL-based systems adapt dynamically by learning from real-time market behavior and trade results.

- What platforms support AI Reinforcement Learning EAs?

These systems are commonly deployed on MetaTrader platforms such as MT4 and MT5, where they execute automated trades based on live market data.

- How does the RL trading system learn from the market?

It learns through a reward system where profitable trades reinforce successful actions, while losses and risks act as penalties that adjust future behavior.

- What type of market data does an RL EA use?

It uses real-time inputs such as OHLC price data, tick volume, order book depth, and volatility indicators like ATR and standard deviation.

- What is the role of exploration and exploitation in RL trading?

Exploration allows the system to test new strategies, while exploitation focuses on using proven profitable strategies to maximize returns.

- How does the EA adjust during high market volatility?

During volatile conditions, the EA can reduce risk, adjust position sizing, or switch trading styles such as moving from swing trading to scalping.

- How is risk managed in an RL-based trading system?

Risk is controlled through stop-loss settings, dynamic position sizing, exposure limits, and penalties for excessive drawdowns or overtrading.

- Why is real-time adaptation important in trading?

Markets change rapidly due to volatility, liquidity shifts, and news events. Real-time adaptation helps the EA respond instantly and maintain performance stability.

- Can an RL-based EA improve over time?

Yes, it continuously improves by analyzing past and current trades, refining its strategy, and adjusting decisions based on accumulated market experience.